Segmentation, Mapping, Text, LLMs

MGT 100 Week 2

This version: May 2026 | License: CC BY 4.0 | We use JavaScript to track readership.

We welcome reuse with attribution. Please share widely.

Segmentation

- Putting the S into STP

Heterogeneity

- A fancy way to say that customers differ, e.g.

- Product needs–usage intensity, frequency, context; loyalty

- Most important dimension of heterogeneity, by far

- This perspective differs from the reading

- Demographics–often overrated as predictors of behavior

- Psychographics–Orientation to Art, Status, Religion, Family, …

- Location

- Experience

- Information

- Attitudes

- Differences may predict purchases, wtp, usage, satisfaction, retention, …

Product needs predict behavior far better than demographics. Why might marketers still default to demographic segmentation?

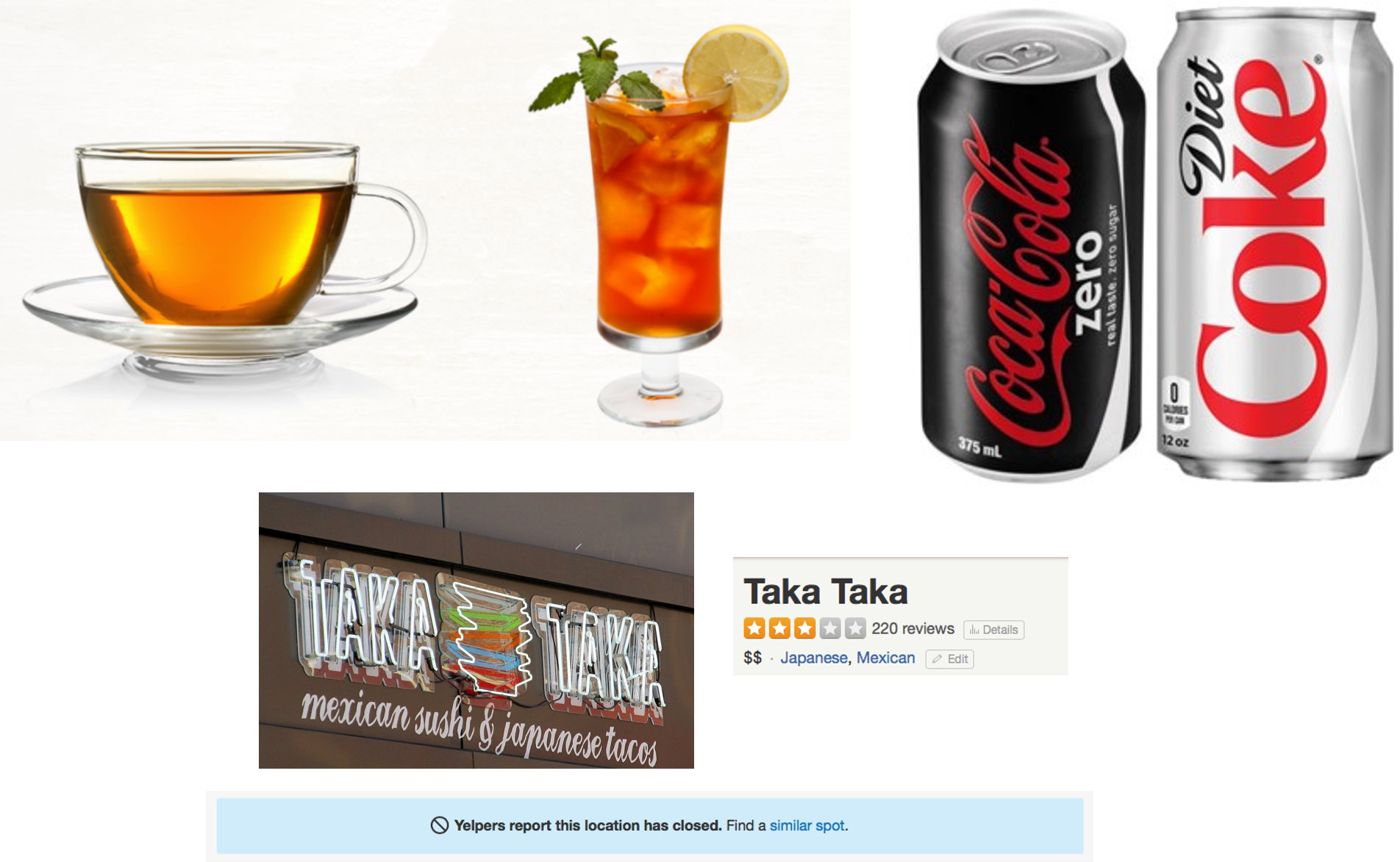

Few people ask for room-temperature tea. Why did Coke introduce Coke Zero when it already had Diet Coke? Sometimes when you try to appeal to everyone, you appeal to nobody. How can you predict how the market will react to your product?

Market Segmentation

- Segments: distinct customer groups with similar attributes within a segment, different attributes between segments

- Fundamental since the 1960s

- Numerous segmentation techniques exist

- Customer Response Profiles embody segments

- B2B segments: customer needs, size, profitability, internal structure

- Segmentation should drive most customer decisions

Measurable

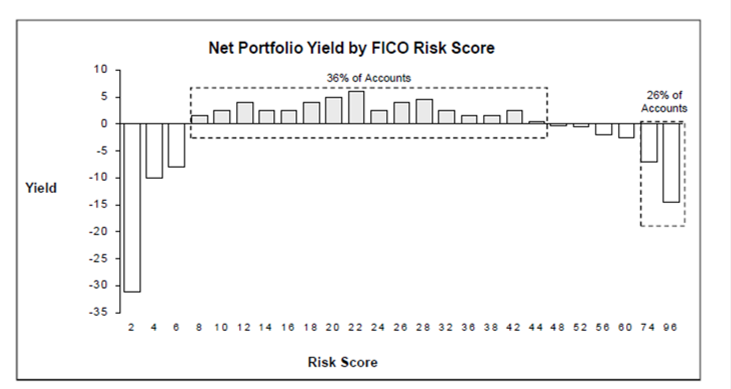

Good segments must be measurable – you need data to identify who belongs to each group. Why do you think those high-FICO accounts are unprofitable?

Substantial

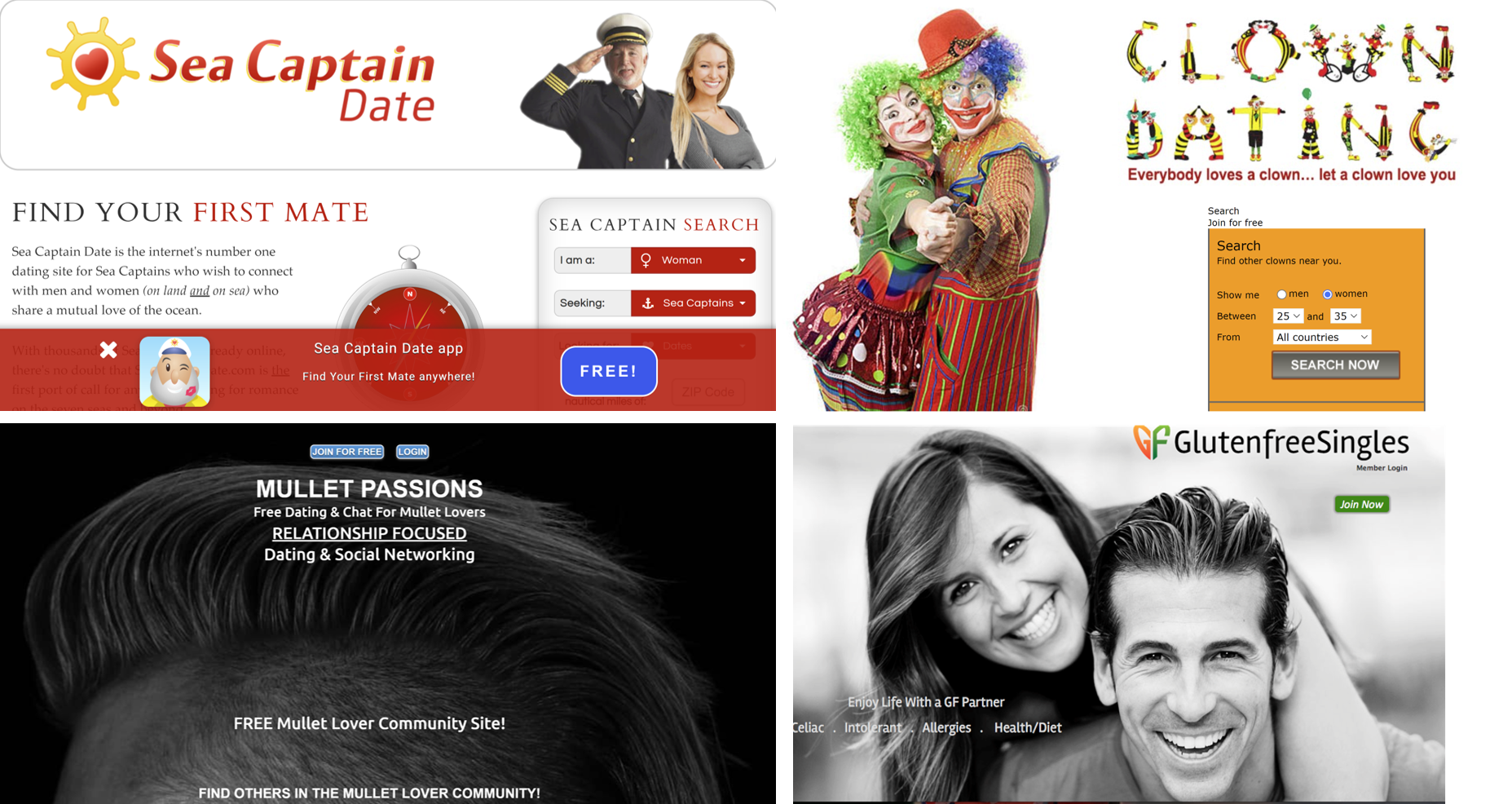

Small segments can sustain profitable niche-focused services, but if you go too small, you can’t sustain operations. Which of these services do you think survived?

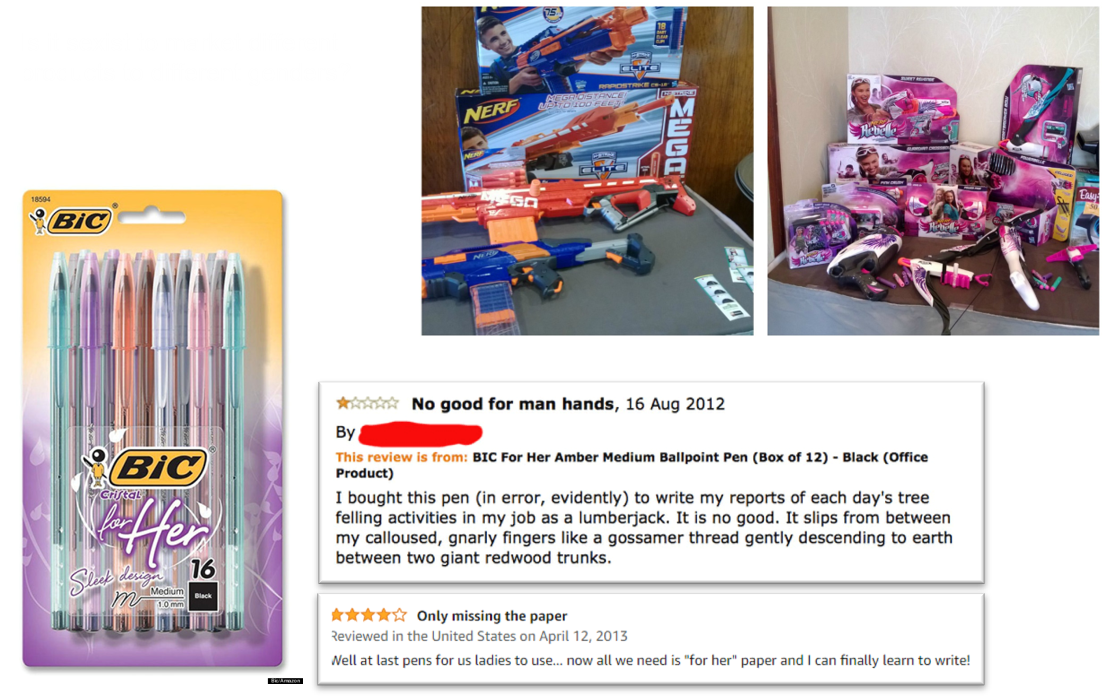

Is segmenting by gender sexist?

Segmenting markets by gender or other demographics may reflect real differences in customer needs, or may reflect a lazy categorization. Sexism or discrimination usually requires some notion of unfairness. Who decides when it’s OK to tailor target products to particular demographics?

We have some high-quality evidence that some products targeted toward women do not cost more than identical products targeted toward men. Gender-based price differences are sometimes conflated with preferences or costs that correlate with gender. Should we ban price differences between products that target different genders?

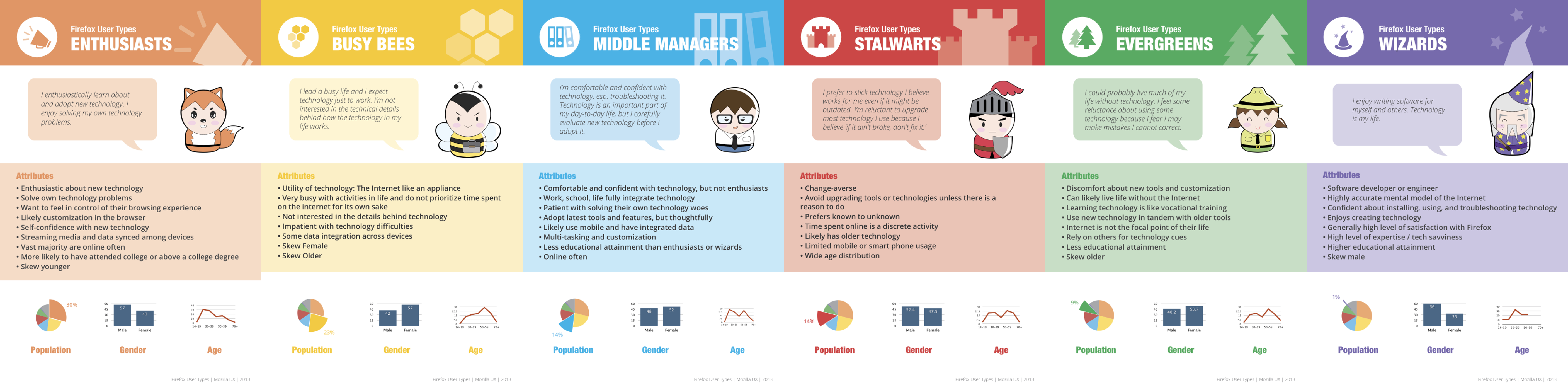

Customer demographics

- In some markets– makeup, diapers, sports, shoes– demographics correlate strongly with behaviors

- In most markets– smartphones, universities, software, cars– demographics correlate weakly with behaviors

- Demographics don’t typically cause purchases, but may predict real differences in customer needs

- Why do we so often overrate demographics as predictors of behavior?

Demographics are easily measurable, visually perceivable, and how many people identify themselves. But what really predicts customer purchases? Why do customers buy things?

Segmentation in Action

- Who does it

- Browser example

- Why we keep it quiet

- Walmart divides its market into 9 segments and targets 4. Which ones?

| | Low Price Sensitivity | Mid Price Sensitivity | High Price Sensitivity |
|---|---|---|---|
| Low Income | | | |
| Mid Income | | | |
| High Income | | | |

If Walmart only targets 4 out of 9 segments, how many segments should your business target?

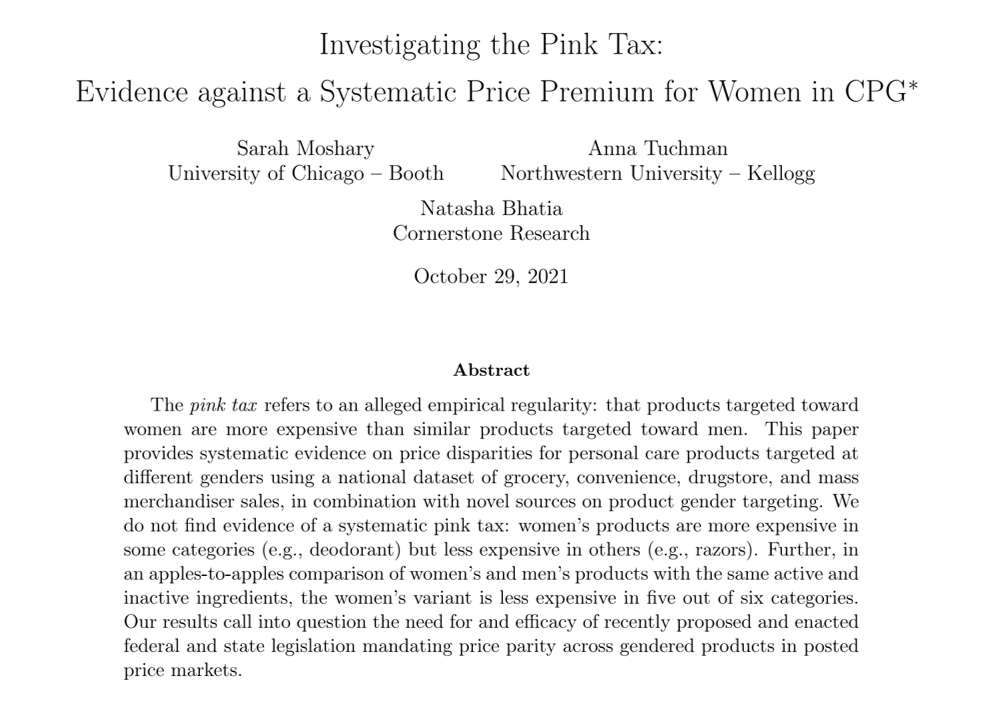

Customer Response Profiles: Firefox Users

- Mozilla’s market research began with customer observations to develop hypotheses; proceeded with in-depth customer interviews to understand motivations for observed behaviors; and concluded with a large survey sample to measure prevalence.

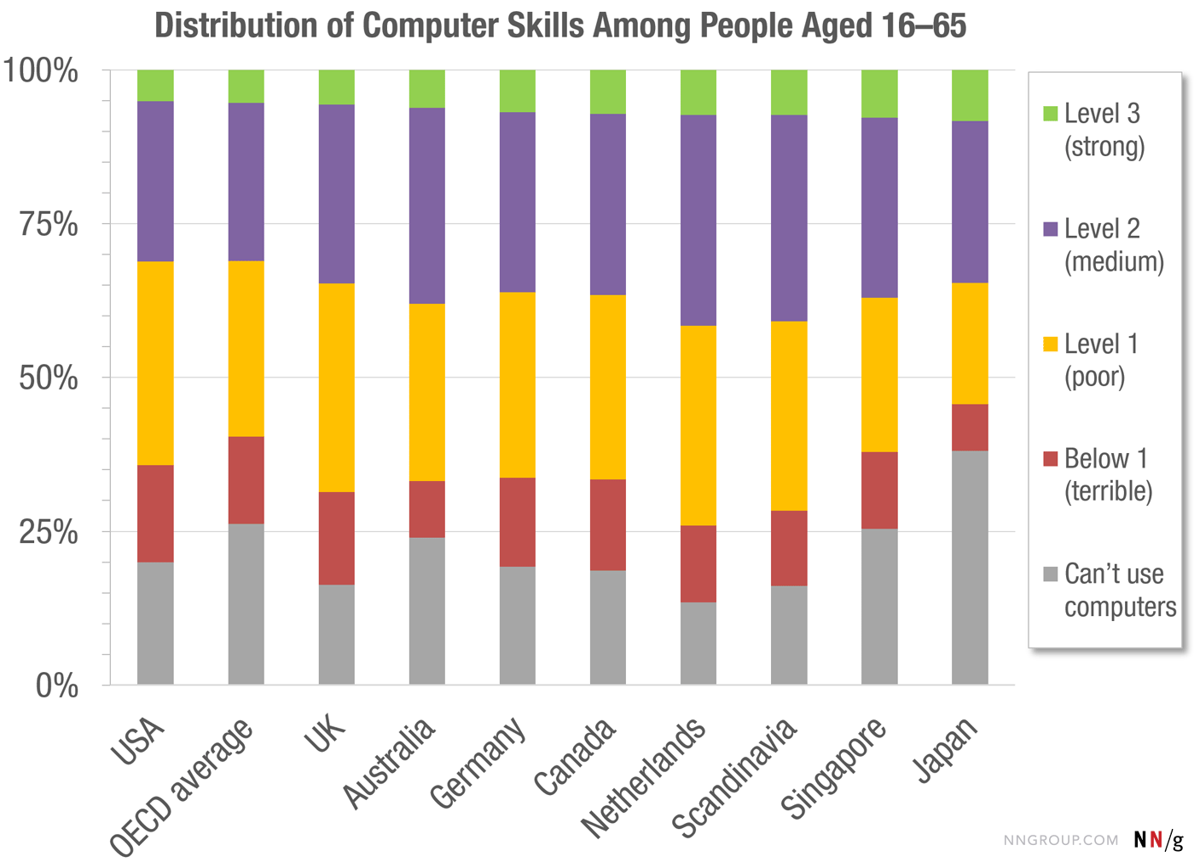

This is a fairly common procedural approach to market research, which usually involves data generation to answer questions about customers (see MGT 108R). What do you notice about segment sizes? Why do the demographic stats follow the user needs?

Why did Mozilla publicize its segmentation scheme? To convince its volunteer developers that typical users are much less technical and interested in simpler features than what those engineers want to build. You must always remember that your customers, by and large, are very different from you, and that you need to rely on data to understand them: your own experience is not reliably predictive.

Firms usually obfuscate targeting

- UO website: “We stock our stores with what we love, calling on our — and our customer’s — interest in contemporary art, music and fashion. …

- “We offer a lifestyle-specific shopping experience for the educated, urban-minded individual in the 18 to 30 year-old range…”

Firms usually obfuscate targeting

- Earnings call: “Our customer is from traditional homes and advantage, but this offers them the benefit of rebellion…

- Our customer is exposed to new ideas and philosophies. This can be a real involvement and work, or it could be just talk.

- Irreverence and concern can live together. Often products sell well that represent the concerns they have but also can speak to their irreverence.

- Our customer leads a pretty cloistered existence although they deem themselves worldly…they believe that they’re right and they believe that everything that’s happening to them is what’s happening everywhere.

- Our customer is highly involved in mating and dating behavior…one of the primary drives for their spending behavior…they work hard to postpone adulthood…”

Internal language shows deep understanding of target customer needs. How would UO customers feel about this characterization?

Customer Data Platform (CDP) - 4 jobs

- Data collection

- Intake data from numerous disparate sources: in-house, direct partners, data brokers, public data

- Data unification or harmonization

- Authenticate and de-duplicate rows and columns

- Data comprehension

- Generate inferences, test hypotheses, make predictions, estimate models

- Covers descriptive, diagnostic & predictive analytics

- Data activation

- Prescriptive analytics: Use data to inform and automate marketing actions

- Automated platforms for transacting & transmitting data: AWS Data Exchange, Databricks Marketplace, Snowflake Marketplace

Previously called “CRM systems,” “data warehouses,” “data lakes.” The names change but the core jobs remain the same. Which of these 4 jobs do you think is hardest to do well?

- Suppose we segment the smartphone market according to each customer’s desired brand.

- Is this a good approach?

How to pick attributes?

- We want to segment based on attributes that drive sales, profit, retention. But how?

- Theory, experience

- Market research

- Customer database

- Consult customer experts (salespeople)

- Find out what other firms are doing

- Let sales data pick for us (heterogeneous logit demand model)

How can we find out how our competitors are segmenting the market?

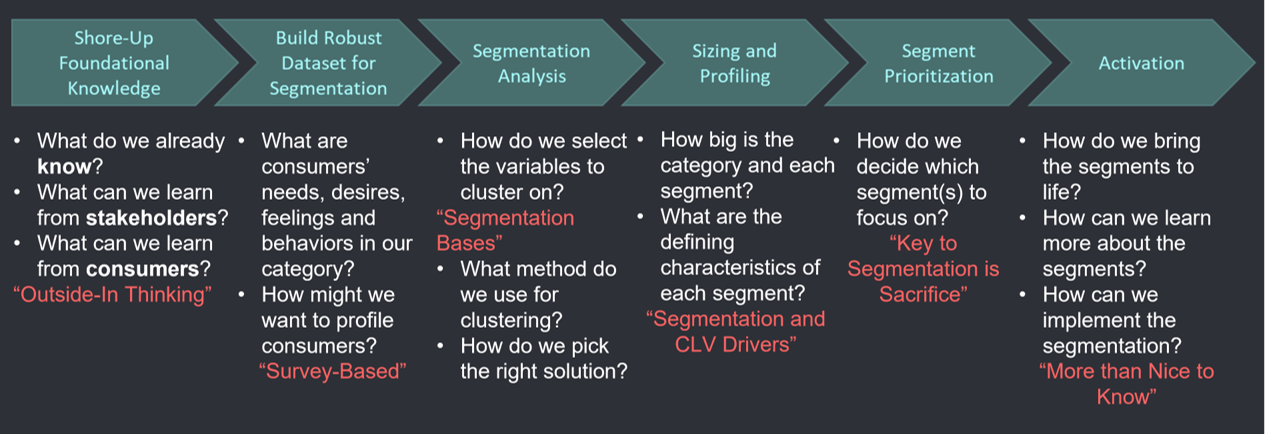

Segmentation in Practice

This process illustrates how analytics techniques are often most useful when integrated within cross-functional teams, enabling the domain experts and business users to collaborate on the outputs.

K-Means

- Simple, elegant approach to define \(k=1,\ldots,K\) segments

- Main idea: Choose \(K\) centroids \(\{C_1, ..., C_K\}\) to minimize total within-segment variation:

\[ \min \sum_{k=1}^K W(C_k)\]

- where \(W(C_k)\) measures variation among customers assigned to segment \(k\)

K-Means is an early unsupervised ML algorithm that categorizes objects without known truth. Many alternative approaches exist. K-Means formalizes an intuitive idea: find K groups that maximize internal similarity. How does average internal similarity change with K?

K-Means

- Most common \(W(C_k)\) function is squared Euclidean distance

- Given a set of \(i \in I_k\) customers in segment \(k\), each with \(p=1,...,P\) measured attributes \(x_{ip}\),

\[ W(C_k)=\sum_{i \in I_k} \sum_{p=1}^{P} (x_{ip}-\bar{x}_{kp})^2\]

where \(\bar{x}_{kp}\) is the average of \(x_{ip}\) for all \(i \in I_k\), and the centroid is \(C_k=(\bar{x}_{k1}, ..., \bar{x}_{kP})\)

Next, we talk about how we get the \(I_k\) segment membership sets.

K-Means Algorithm

- How do we assign customers to segments?

- There are nearly \(K^n\) ways to partition \(n\) obs into \(K\) clusters

- Happily, a simple algorithm finds a local optimum:

1. Randomly choose \(K\) centroids

2. Assign every customer to nearest centroid

3. Compute new centroids based on customer assignments

4. Iterate 2-3 until convergence

5. (Optional) Repeat 1-4 for many random centroids

- You will run this in Week 2 script. Let’s illustrate it in class
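The steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not the Week 2 script: it assumes squared Euclidean distance as the variation measure, and the `customers` data, the function names, and the `n_starts` and `seed` parameters are all made up for the example.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans_once(points, k, rng, max_iter=100):
    """One run of the assign/update loop from a random start."""
    centroids = rng.sample(points, k)           # step 1: random starting centroids
    labels = None
    for _ in range(max_iter):
        # step 2: assign every customer to the nearest centroid
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j]))
                      for p in points]
        if new_labels == labels:                 # step 4: stop once assignments settle
            break
        labels = new_labels
        # step 3: recompute each centroid as the mean of its members
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:                          # keep the old centroid if a segment empties
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))
    total_w = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return total_w, labels, centroids

def kmeans(points, k, n_starts=20, seed=0):
    """Step 5: repeat from several random starts, keep the lowest total variation."""
    rng = random.Random(seed)
    return min((kmeans_once(points, k, rng) for _ in range(n_starts)),
               key=lambda run: run[0])

# Hypothetical customers: (usage hours per week, purchases per month)
customers = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5),
             (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
total_w, labels, centroids = kmeans(customers, k=2)
print(labels, round(total_w, 3))
```

In practice you would standardize the attributes first, so that an attribute measured in large units does not dominate the distances.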

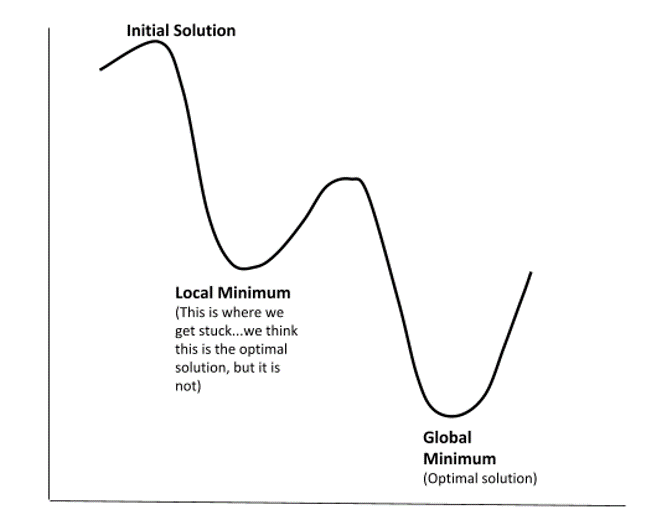

We usually use ‘hillclimbers’ to optimize numeric functions. Imagine I asked you to find the highest point on campus; how? What if I asked you to find the highest point on Earth?

The total \(\sum_k W(C_k)\) is not globally convex, so the algorithm can stop at a local minimum and we can’t guarantee a global minimum. Thus, we pick many starting points and keep the run with the lowest total \(\sum_k W(C_k)\).

Note: Some algos promise to find global minimum, but this is only provable for a globally convex function.

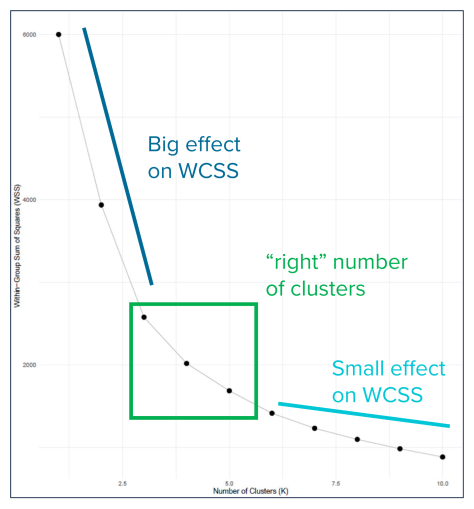

Ways to pick K

- “Scree Plot” or “Elbow method”: showing how quickly \(W(C_k)\) decreases with \(K\). Can balance accuracy with parsimony, but may be haphazard

- Internal Indices: e.g., run K-Means within distinct data partitions and then quantify how centroid stability changes with \(K\)

- External Indices: compare clustering algorithm results to an external dataset or some source of known truth

K-means is a simple clustering algorithm and may perform poorly when data exhibit particular properties. There are many, many more sophisticated approaches.
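To see what an elbow looks like, here is a small sketch on one-dimensional data. It assumes squared deviations as the variation measure and uses the fact that, in one dimension, the best segments are contiguous runs of the sorted values, so every possible set of cut points can be checked exactly. The `usage` numbers are invented to contain three visible groups.

```python
from itertools import combinations

def within_ss(values):
    """Sum of squared deviations from the group mean."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def best_total_w(values, k):
    """Lowest total within-segment variation for k segments of 1-D data.

    Tries every way of placing k-1 cut points in the sorted values.
    Only practical for small datasets; shown for illustration.
    """
    xs = sorted(values)
    best = float("inf")
    for cuts in combinations(range(1, len(xs)), k - 1):
        bounds = (0, *cuts, len(xs))
        total = sum(within_ss(xs[a:b]) for a, b in zip(bounds, bounds[1:]))
        best = min(best, total)
    return best

# Hypothetical weekly usage hours with three visible groups
usage = [1, 2, 2, 3, 10, 11, 12, 20, 21, 22]
for k in range(1, 6):
    print(k, round(best_total_w(usage, k), 2))
```

The total falls steeply up to K=3 and only slightly afterwards; that bend is the elbow. Real data rarely show a bend this clean, which is why the method can feel haphazard.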

Market Mapping

- Positioning in attribute space

- Economic theories of differentiation: Vertical, horizontal

- PCA & Perceptual maps

Marketing strategy

- Segmentation: How do customers differ

- Targeting: Which segments do we seek to attract and serve

- Positioning

- What value proposition do we present

- How do our product’s objective attributes compare to competitors

- Where do customers perceive us to be

- How do we want to influence consumer perceptions

- Market mapping enables Positioning

The idea of Positioning was adapted from military battlefield tactics: Where are our infantry? Where is the enemy? Which troops do we direct where to win? Market mapping enables quantifiable and evaluable strategic decisions.

Market Maps

- Market maps use customer data to depict competitive situations. Why?

- Understand brand/product positions in the market

- Track changes

- Identify new products or features to develop

- Understand competitor imitation/differentiation decisions

- Evaluate results of recent tactics

- Cross-selling, advertising, identifying complements or substitutes, bundles…

- We often lack ground truth data

- Using a single map to set strategy is risky

- Repeated mapping builds confidence (“Movies, not pictures”)

- Many large brands do this regularly

Product Differentiation

Vertical (Quality)

- Attributes where more is better, all else constant

- Efficacy, e.g. CPU speed or horsepower

- Efficiency, e.g. power consumption

- Input good quality (e.g. clothes, food)

- Important: not everyone buys the better option (why not?)

Horizontal (Fit or Match)

- Attributes w heterogeneous valuations

- Physical location

- Familiarity, e.g. what you grew up with

- Taste, e.g. sweetness or umami

- Brand image, e.g. Tide, Jif, Coca-Cola

- Complements, e.g. headphones or charging cables

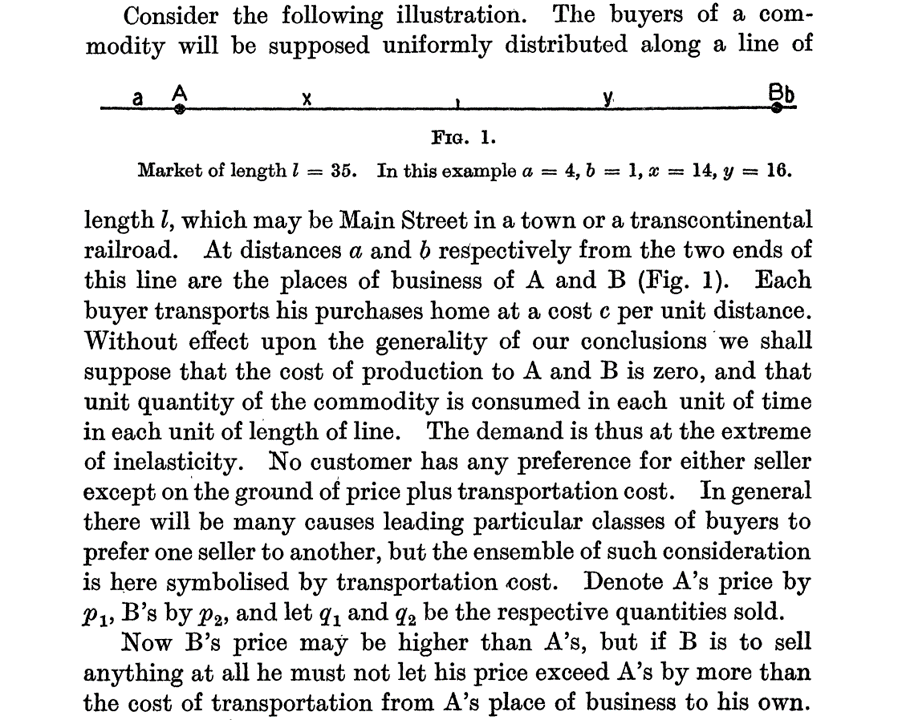

Hotelling (1929)

Hotelling argued persuasively that sellers of a commodity could generate economic profits, simply because consumer transportation costs generate local market power. What is a “marginal consumer”?

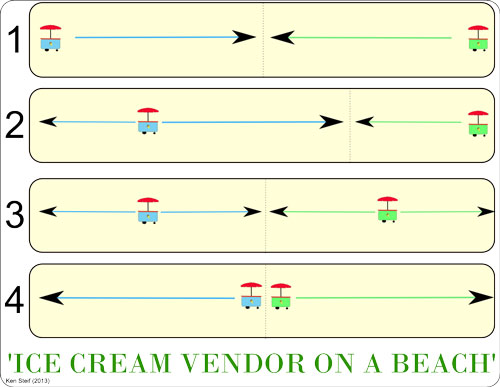

Ice cream vendors

What happens when an ice cream vendor moves toward her competitor?
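A worked version of the ice cream question, under assumptions that go beyond the slide: consumers are spread evenly along a line from 0 to 1, travel cost is linear at rate `t` per unit of distance, and prices are fixed. The marginal consumer between vendors at `a < b` is the location `x` where total costs are equal, \(p_a + t(x-a) = p_b + t(b-x)\), which gives \(x = (a+b)/2 + (p_b-p_a)/(2t)\). The function name and parameter values are illustrative.

```python
def marginal_consumer(a, b, p_a=1.0, p_b=1.0, t=1.0):
    """Location of the consumer indifferent between vendors at a < b.

    Assumes a unit line, linear travel cost t, and fixed prices p_a, p_b.
    """
    if not 0 <= a < b <= 1:
        raise ValueError("expected 0 <= a < b <= 1")
    if abs(p_b - p_a) >= t * (b - a):
        raise ValueError("price gap too large: no interior marginal consumer")
    return (a + b) / 2 + (p_b - p_a) / (2 * t)

# With consumers spread evenly on [0, 1], vendor A's market share equals
# the location of the marginal consumer (everyone to her left buys from A).
for a in (0.1, 0.3, 0.5):
    share_a = marginal_consumer(a, b=0.75)
    print(f"A at {a:.1f}: share of A = {share_a:.3f}")
```

Holding prices fixed, every step vendor A takes toward B moves the marginal consumer toward B, so A's share grows. The sketch ignores how prices might respond once the vendors sit closer together.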

Median voter theorem

A famous theoretical application of the Hotelling line predicts that two competing political parties may adopt similar positions, if both try to appeal to the same median voter, even if nearly every other voter might prefer a different position.

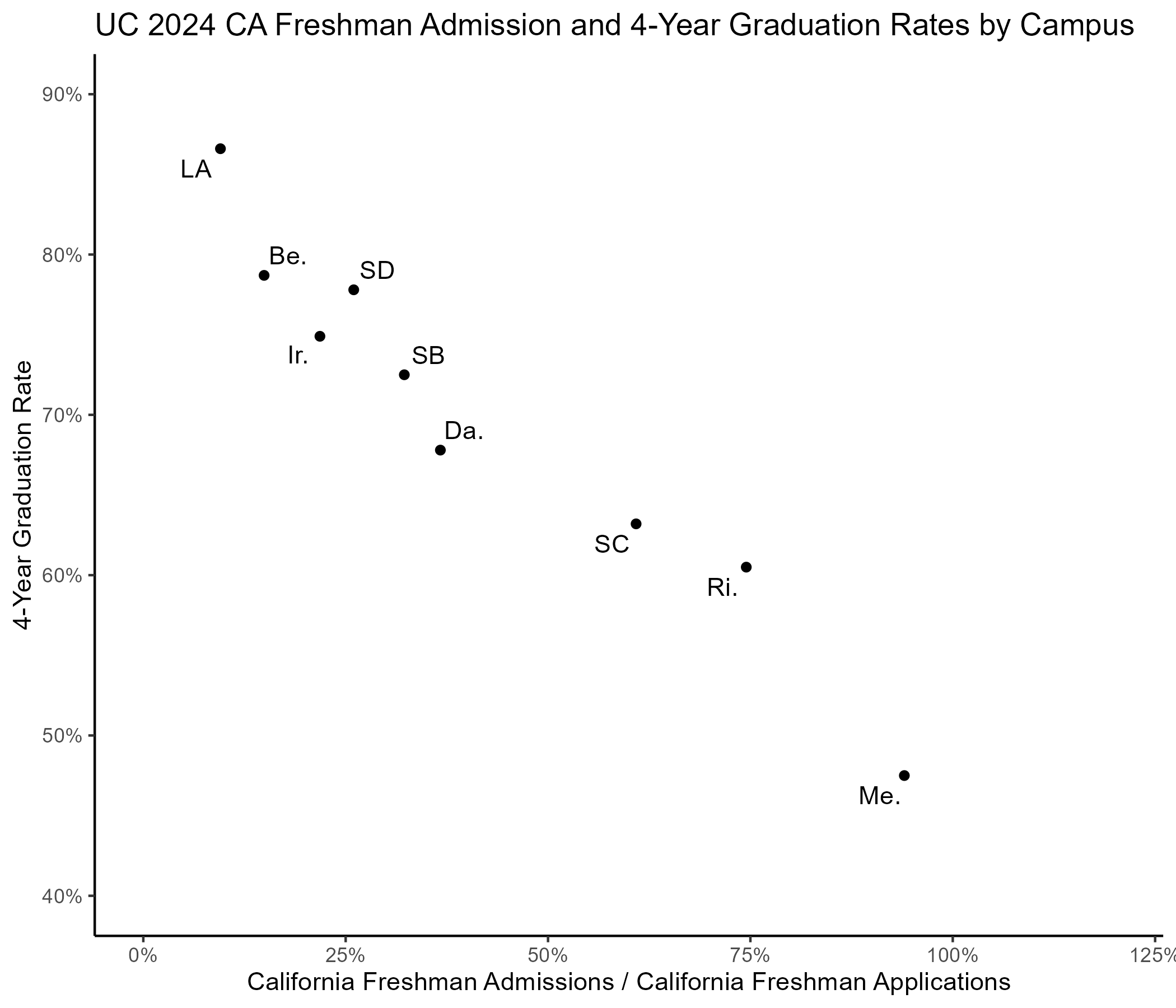

- Case study: Suppose you are the UCSD Chancellor, tasked with increasing in-state freshman enrollments

- You want to map UC campuses in the market for California freshman applicants

- You posit that selectivity and time-to-degree matter most

- Students want to connect with smart students

- Students want to graduate on time

This two-attribute restriction is simply to enable graphing. What other attributes might matter for university choice?

LA vs. Berkeley: Is that ordering right? Market maps are only as good as the data and attributes we choose.

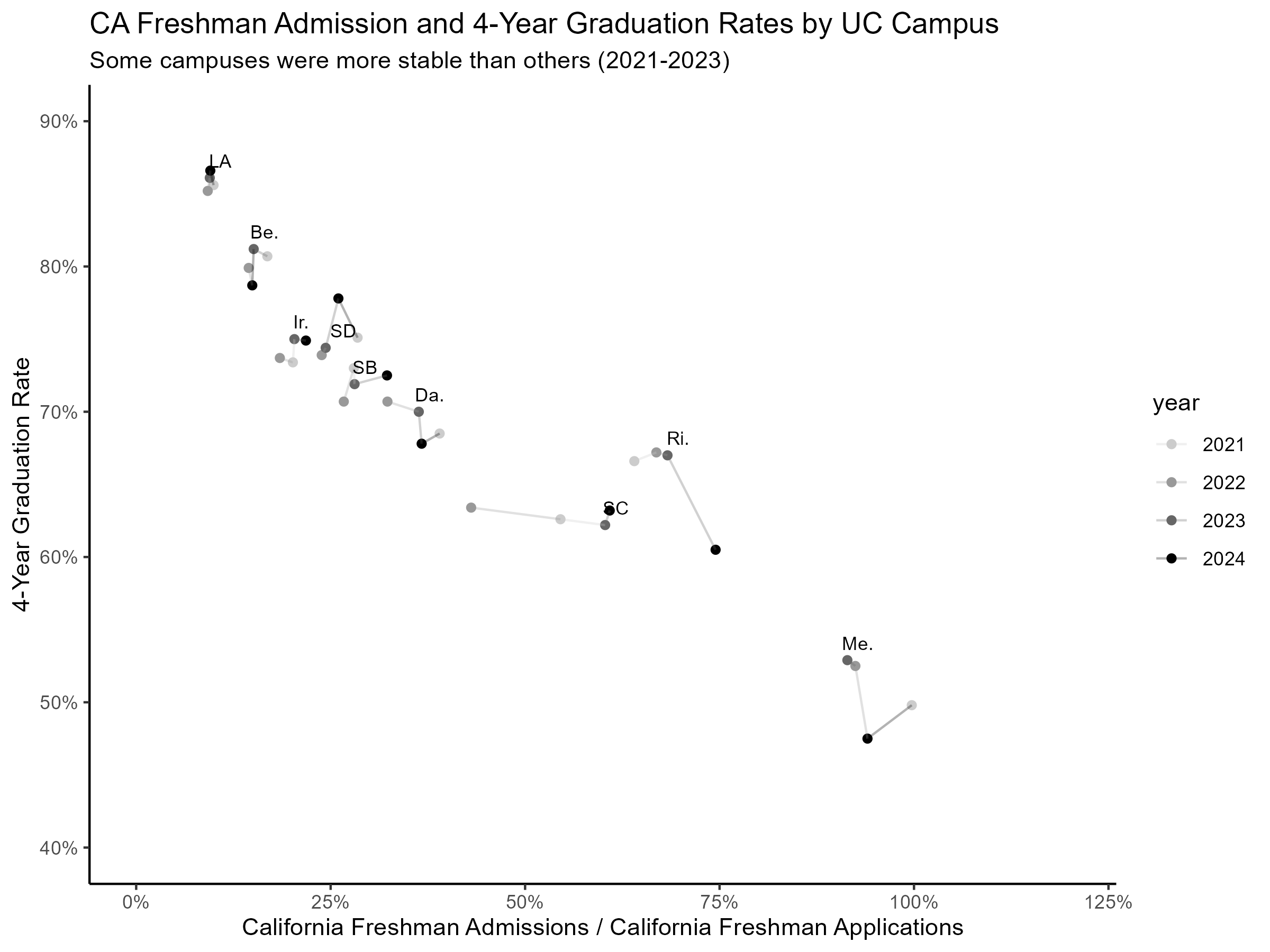

“Movies, not pictures” – tracking positions over time reveals trends that a single snapshot might miss. What changes do you notice?

What if there are too many product attributes to graph?

- Enter Principal Components Analysis

- Powerful way to summarize data

- Projects high-dimensional data into a lower dimensional space

- Designed to minimize information loss during compression

- Pearson (1901) invented; Hotelling rediscovered (1933 & 36)

PCA facilitates market mapping by optimally compressing high-dimensional product attribute spaces to graphable lower-dimensional spaces.

Principal Components Analysis (PCA)

- Store \(K\) continuous attributes for \(J>K\) products in \(X\), a \(J\times K\) matrix

- Consider \(X\) a \(K\)-dimensional space containing \(J\) points

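- Center each attribute (and usually standardize it), then calculate \(X'X\), which is proportional to the \(K \times K\) covariance matrix of the attributes

- The first \(n\) eigenvectors of the attribute covariance matrix (those with the largest eigenvalues) give unit vectors to map products in \(n\)-dimensional space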

- We’ll use first 1 or 2 eigenvectors for visualization

We customarily rank-order the principal components in descending order of variance explained, so the first is always the most important, second is second-most important, etc. What is the max number of principal components possible in a K-dimensional space?
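A minimal sketch of the PCA recipe above in plain Python, finding only the first principal component. It swaps in power iteration for a full eigendecomposition (a standard way to find the leading eigenvector, chosen here to avoid extra libraries). The `phones` data and all function names are hypothetical.

```python
import math
import random

def standardize(X):
    """Center each column and scale it to unit sample variance.

    Assumes every column varies; a constant column would divide by zero.
    """
    cols = list(zip(*X))
    means = [sum(c) / len(c) for c in cols]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / (len(c) - 1))
           for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in X]

def covariance(Z):
    """K x K sample covariance matrix Z'Z / (J - 1) of centered data Z."""
    J, K = len(Z), len(Z[0])
    return [[sum(row[a] * row[b] for row in Z) / (J - 1) for b in range(K)]
            for a in range(K)]

def first_component(S, n_iter=500, seed=0):
    """Leading eigenvector and eigenvalue of S via power iteration."""
    rng = random.Random(seed)
    v = [rng.gauss(0, 1) for _ in S]             # random start direction
    for _ in range(n_iter):
        w = [sum(s * x for s, x in zip(row, v)) for row in S]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigenvalue = sum(vi * sum(s * x for s, x in zip(row, v))
                     for vi, row in zip(v, S))
    return v, eigenvalue

# Hypothetical phones: (screen inches, battery mAh, price USD)
phones = [[6.1, 3200, 799], [6.7, 4400, 1099], [5.4, 2400, 599],
          [6.5, 5000, 449], [6.8, 5000, 1199]]
Z = standardize(phones)
S = covariance(Z)
pc1, ev1 = first_component(S)
scores = [sum(z * w for z, w in zip(row, pc1)) for row in Z]   # 1-D map positions
share = ev1 / sum(S[a][a] for a in range(len(S)))              # variance explained by PC1
print([round(w, 2) for w in pc1], round(share, 2))
```

The sign of a principal component is arbitrary, so flipping every entry of `pc1` gives an equally valid axis.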

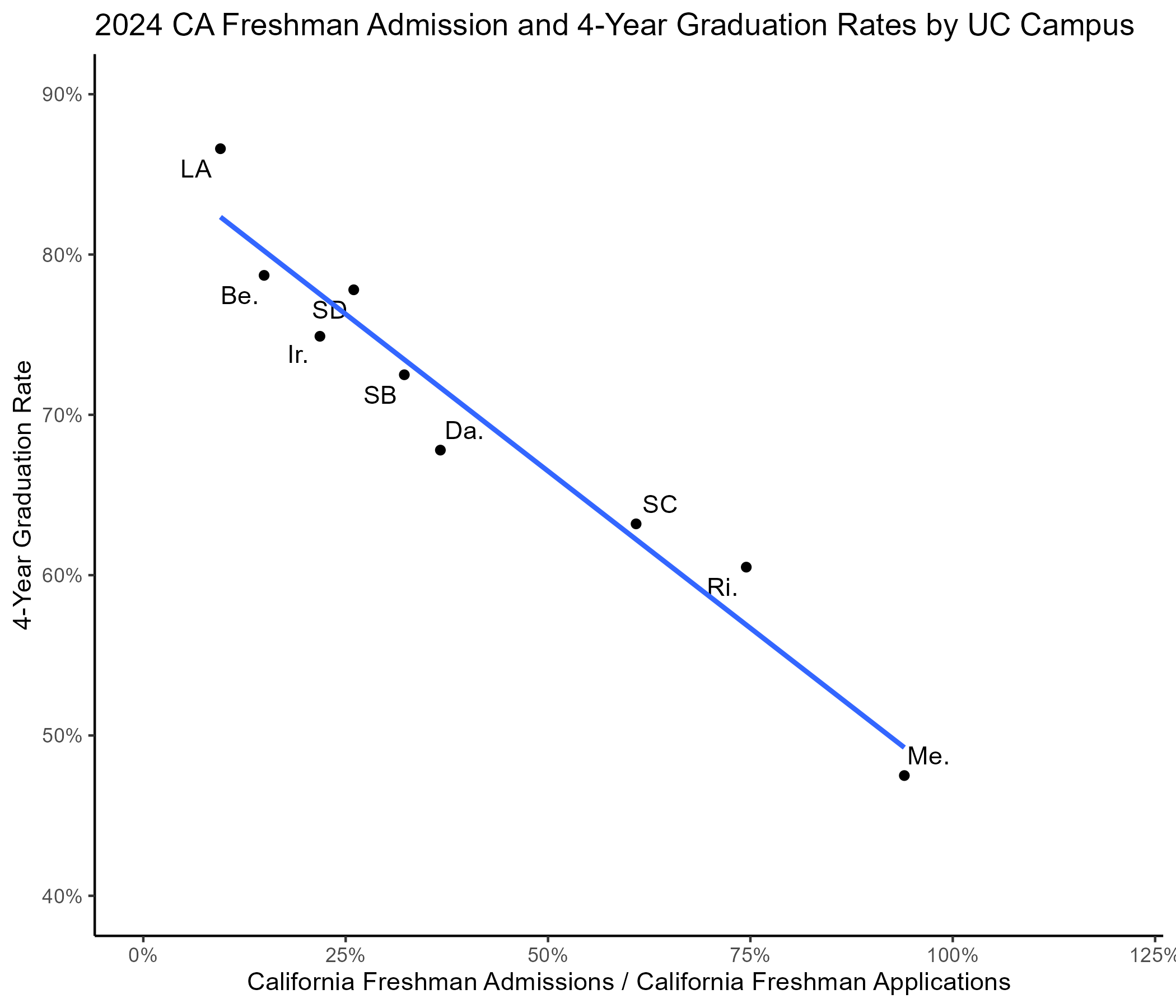

Project each datapoint orthogonally onto the best-fit line (R-sq = .86). This is the core geometric idea behind PCA – finding the direction that explains the most variation in the data.

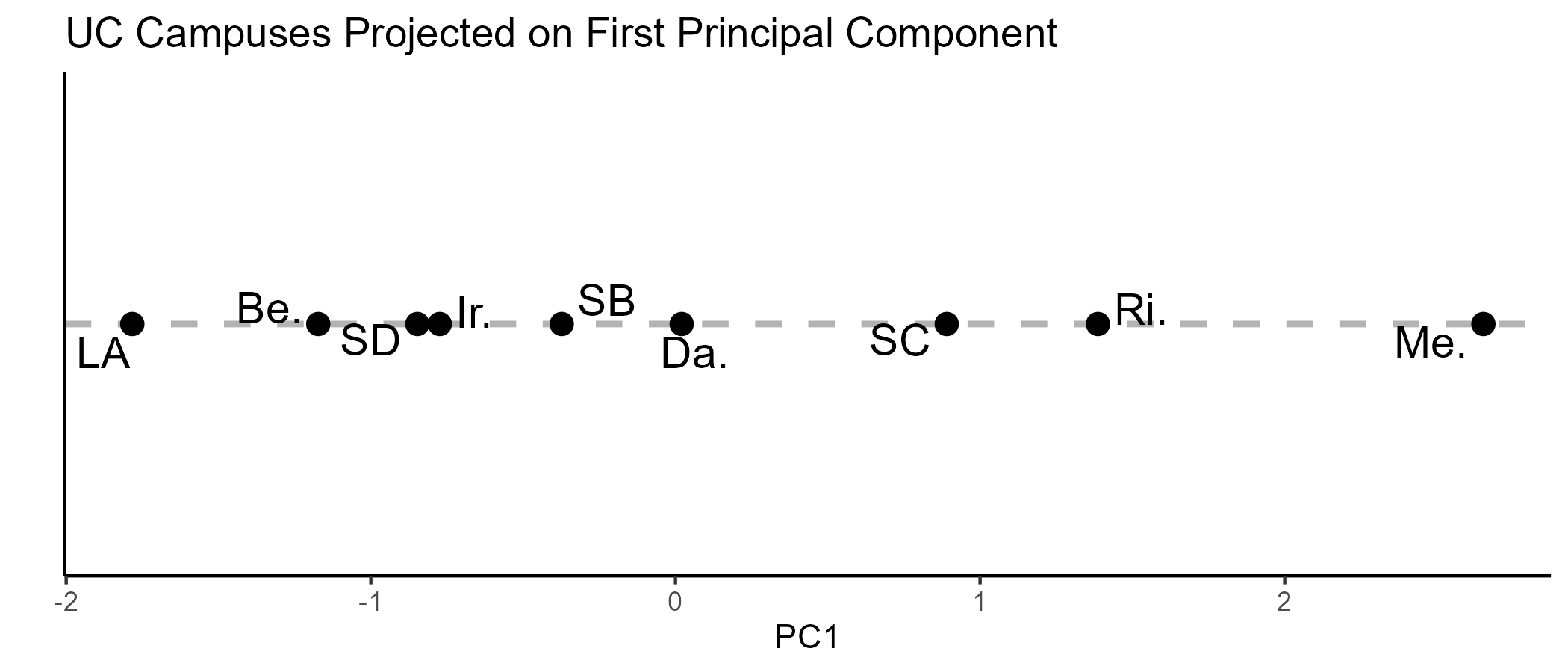

Now, just graph the 1-dim line with the original points projected onto it. Do the relative conclusions hold up? What does PC1 mean? How much information did we lose? Note, these are standardized data; that’s why some relative positions change. Now imagine projecting from 20-dimensions down to 2.

PCA FAQ

- How do I interpret the principal components?

- Principal components are the “new axes” for the newly-compressed space. Each is a linear combination of all of the original space’s axes

- What are the main assumptions of PCA?

- Variables are continuous and linearly related

- Principal components that explain the most variation matter most

- How do I choose the # of principal components?

- Business criteria: Usually 1 or 2 if you mainly want to visualize the data. When doing something more sophisticated, consider how trading off compression vs. data loss affects your objective

- Statistical criteria: Cumulative variance explained, scree plot, eigenvalue > 1

- What are some similar tools to PCA?

- Factor analysis, linear discriminant analysis, correspondence analysis…
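The statistical criteria for choosing the number of components can be computed directly from the eigenvalues. In this sketch the eigenvalues are made up (as if from a PCA on 5 standardized attributes, so they sum to 5), and the 80% cutoff is an arbitrary illustrative threshold rather than a rule.

```python
from itertools import accumulate

def variance_explained(eigenvalues):
    """Share and cumulative share of variance for each principal component."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    shares = [ev / total for ev in ordered]
    cumulative = list(accumulate(shares))
    return ordered, shares, cumulative

# Made-up eigenvalues for illustration
eigenvalues = [2.6, 1.3, 0.6, 0.3, 0.2]
ordered, shares, cumulative = variance_explained(eigenvalues)

# "Eigenvalue > 1" rule: keep components that explain more than one
# standardized attribute's worth of variance
n_kaiser = sum(ev > 1 for ev in ordered)

# Cumulative variance rule with an assumed 80% threshold
n_80 = next(i + 1 for i, c in enumerate(cumulative) if c >= 0.80)

print(n_kaiser, n_80, [round(c, 2) for c in cumulative])
```

The two rules can disagree, as they do here, which is one reason to weigh them against the business criteria.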

Key PCA choices: Which variables to include, how many PCs to keep, how to interpret locations in space. What would you do if the first component explains only 30% of variance?

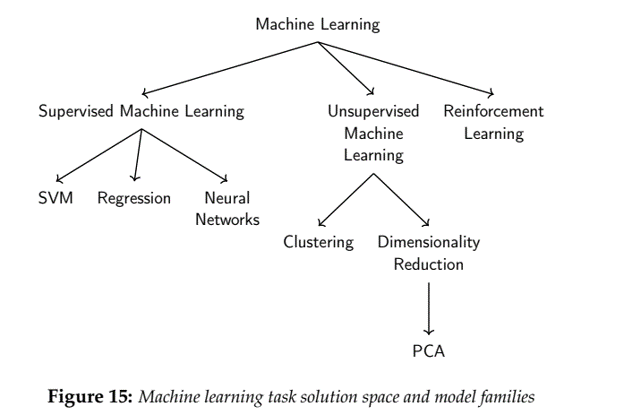

How does PCA relate to K-means?

- K-Means identifies clusters within a dataset

- K-Means augments a dataset by identifying similarities within it

- K-Means never discards data

- PCA combines data dimensions to condense data with minimal information loss

- PCA optimally reduces data dimensionality

- PCA facilitates visual interpretation but does not identify similarities

- Both are unsupervised ML algos

- Both have “tuning parameters” (e.g. # segments, # principal components)

- They serve different purposes & can be used together

- E.g. run PCA to first compress large data, then K-Means to identify similar points

- Or, K-Means to identify clusters, then PCA to visualize centroids in 2D space

PCA compresses; K-Means groups. They complement each other well: compress first to remove noise, then cluster, or cluster first then compress to visualize. Why does the order matter?

Conceptual organization

Understanding the landscape of ML algorithms helps you choose the right tool for the job. Where does K-Means fit?

Mapping Practicalities

- How to measure intangible attributes like trust?

- Survey consumers, e.g. “How much do you trust this brand?”

- What if we don’t know, or can’t measure, the most important attributes?

- Multidimensional scaling

- How should we weigh attributes?

Marketing research measures subjective perceptions. Demand modeling can help weigh attributes.

Multidimensional Scaling

- Suppose you can measure product similarity

- For \(J\) products, populate the \(J\times J\) matrix of similarity scores

- With J brands, we have J points in J dimensions. Each dimension j indicates similarity to brand j. PCA can project J dimensions into 2D for plotting

- Use PCA to reduce to a lower-dimensional space

- Pro: We don’t need to predefine attributes

- Con: Axes can be hard to interpret and validate

- MDS Intuition

- With a ruler and map, measure distances between 20 US cities (“similarity”)

- Record distances in a 20x20 matrix: PCA into 2D should recreate the map

- But, we don’t usually know the map we are recreating, so we look for ground-truth comparisons to indicate credibility and reliability
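The ruler-and-map intuition can be checked on a tiny example. This sketch uses classical (Torgerson) MDS, one common variant: double-center the squared distances, then take the leading eigenvectors, found here by power iteration with deflation. It assumes a symmetric matrix of true Euclidean distances; the four "cities" on a 4-by-3 rectangle and the function name are made up.

```python
import math
import random

def classical_mds(D, dims=2, n_iter=500, seed=0):
    """Coordinates whose pairwise distances approximate the matrix D."""
    n = len(D)
    sq = [[d * d for d in row] for row in D]
    row_mean = [sum(r) / n for r in sq]          # equals column means: D is symmetric
    grand = sum(row_mean) / n
    # Double-center the squared distances
    B = [[-0.5 * (sq[i][j] - row_mean[i] - row_mean[j] + grand)
          for j in range(n)] for i in range(n)]
    rng = random.Random(seed)
    coords = [[] for _ in range(n)]
    for _ in range(dims):
        v = [rng.gauss(0, 1) for _ in range(n)]
        for _ in range(n_iter):                  # power iteration
            w = [sum(b * x for b, x in zip(row, v)) for row in B]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0:                        # nothing left to explain
                break
            v = [x / norm for x in w]
        lam = sum(vi * sum(b * x for b, x in zip(row, v))
                  for vi, row in zip(v, B))
        for i in range(n):
            coords[i].append(math.sqrt(max(lam, 0.0)) * v[i])
        # Deflate: remove the dimension just found before seeking the next
        B = [[B[i][j] - lam * v[i] * v[j] for j in range(n)] for i in range(n)]
    return coords

# Four hypothetical "cities" at the corners of a 4-by-3 rectangle
cities = [(0, 0), (4, 0), (4, 3), (0, 3)]
D = [[math.dist(p, q) for q in cities] for p in cities]
recovered = classical_mds(D)
for point in recovered:
    print([round(c, 2) for c in point])
```

The recovered map matches the original only up to rotation, reflection, and shift, which is part of why the axes can be hard to interpret.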

Applications: political candidate positioning, personality trait perception, brand/product perception.

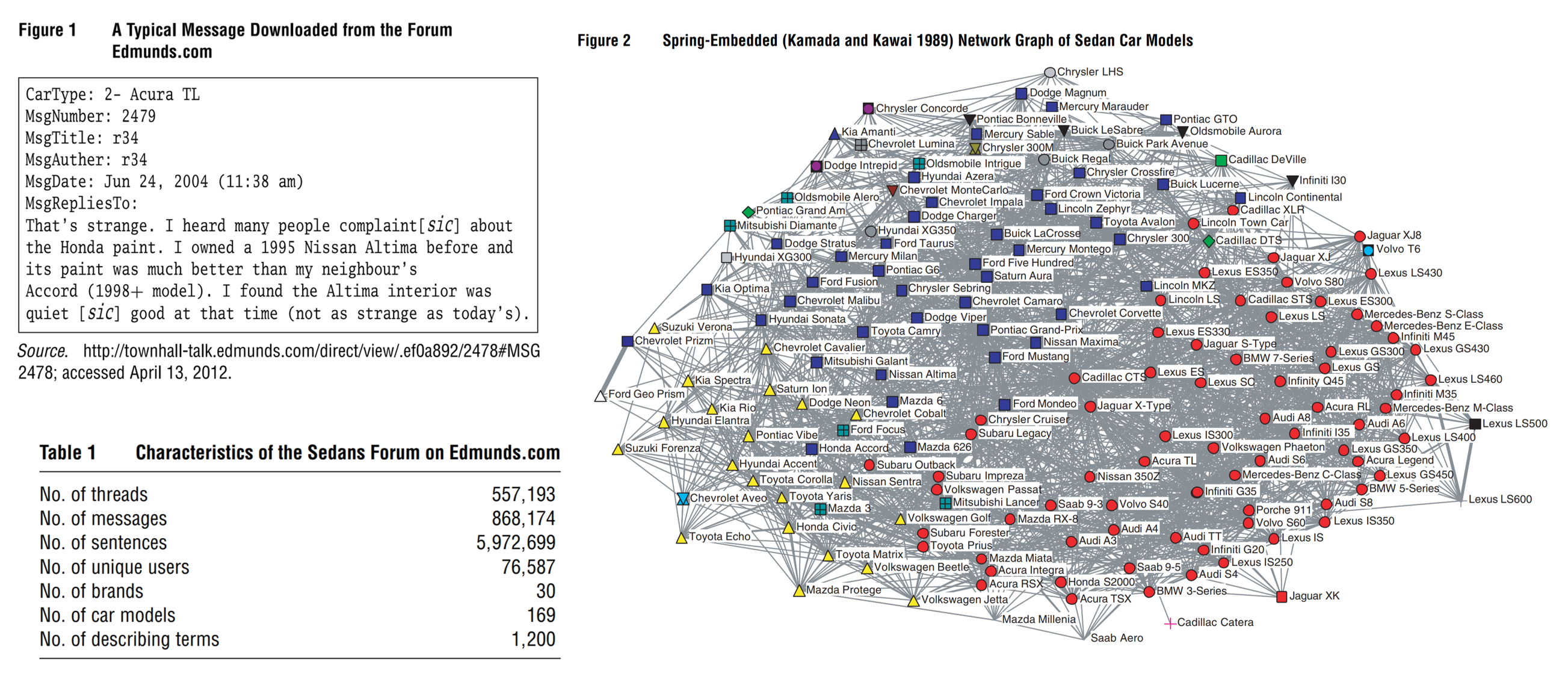

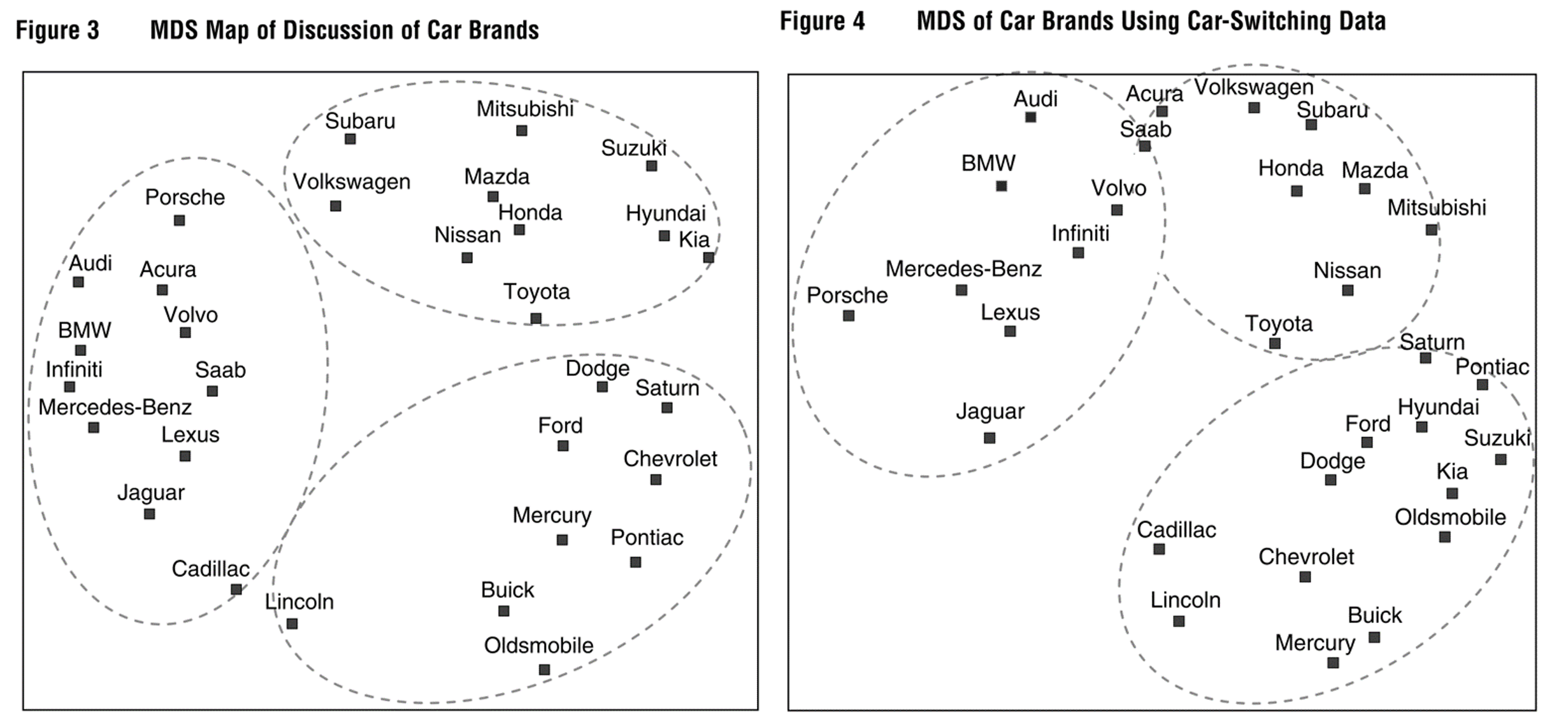

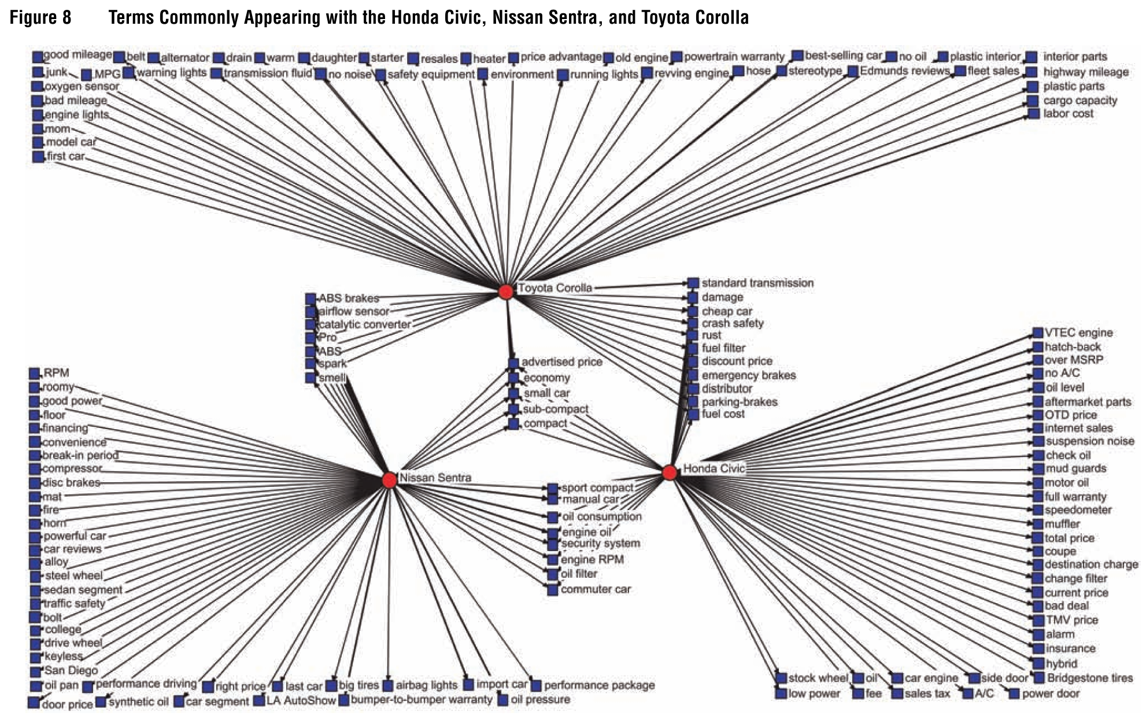

Example: Netzer et al. (2012)

Netzer et al. pioneered using text data from online car reviews to construct market maps. Brand similarity rankings came from organic pairwise comparisons within text reviews. How does this approach compare to marketing research surveys?

The map on the left is based on co-occurrence of car brands within text reviews; the map on the right is based on switching data from JD Power. The map clearly shows distinct submarkets. How do these two data sources compare? Comparisons like this are called “convergent validity.”

The authors also showed how to relate MDS results to attribute space. Notice that “attributes” are N-grams of words. Which features do consumers use to compare these brands?

How to weigh product attributes?

- Demand modeling uses product attributes and prices to explain customer purchases

- Heterogeneous demand modeling uses product attributes, prices and customer attributes to explain purchases

- “Revealed preferences”: Demand models explain observed choices in uncontrolled market environments

How does demand modeling differ from PCA in choosing product attribute weights?

Text data

- The Challenge

- Embeddings

- Reinforcement Learning

- LLMs: How do they work

The Challenge

- Suppose an English speaker knows \(n\) words, say \(n=10,000\)

- How many unique strings of \(N\) words can they generate?

- \(N=1\): \(10{,}000\)

- \(N=2\): \(10{,}000^2=100{,}000{,}000\)

- \(N=3\): \(10{,}000^3=1{,}000{,}000{,}000{,}000=1\) Trillion

- \(N=4\): \(10{,}000^4=10^{16}\)

- \(N=5\): \(10{,}000^5=10^{20}\)

- \(N=6\): \(10{,}000^6=10^{24}=1\) Trillion Trillions

- ….

- Why do we make kids learn proper grammar?

- Average formal written English sentence is ~15 words

Grammar narrows down the set of unique sentences a person can generate, making prediction easier and enabling mutual understanding. The combinatorial explosion of language is a core challenge for any text analysis method.
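The same arithmetic extends to the ~15-word average sentence. A quick sketch, assuming (as above) a 10,000-word vocabulary and ignoring grammar entirely; the function name is illustrative.

```python
def count_strings(vocab_size, length):
    """Number of distinct word strings of a given length (repeats allowed)."""
    return vocab_size ** length

for length in (1, 2, 3, 6, 15):
    # The count is an exact power of ten, so its digit count gives the exponent
    exponent = len(str(count_strings(10_000, length))) - 1
    print(f"N={length}: 10^{exponent} possible strings")
```

At \(N=15\) the count is \(10^{60}\), far more strings than any dataset could ever contain.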

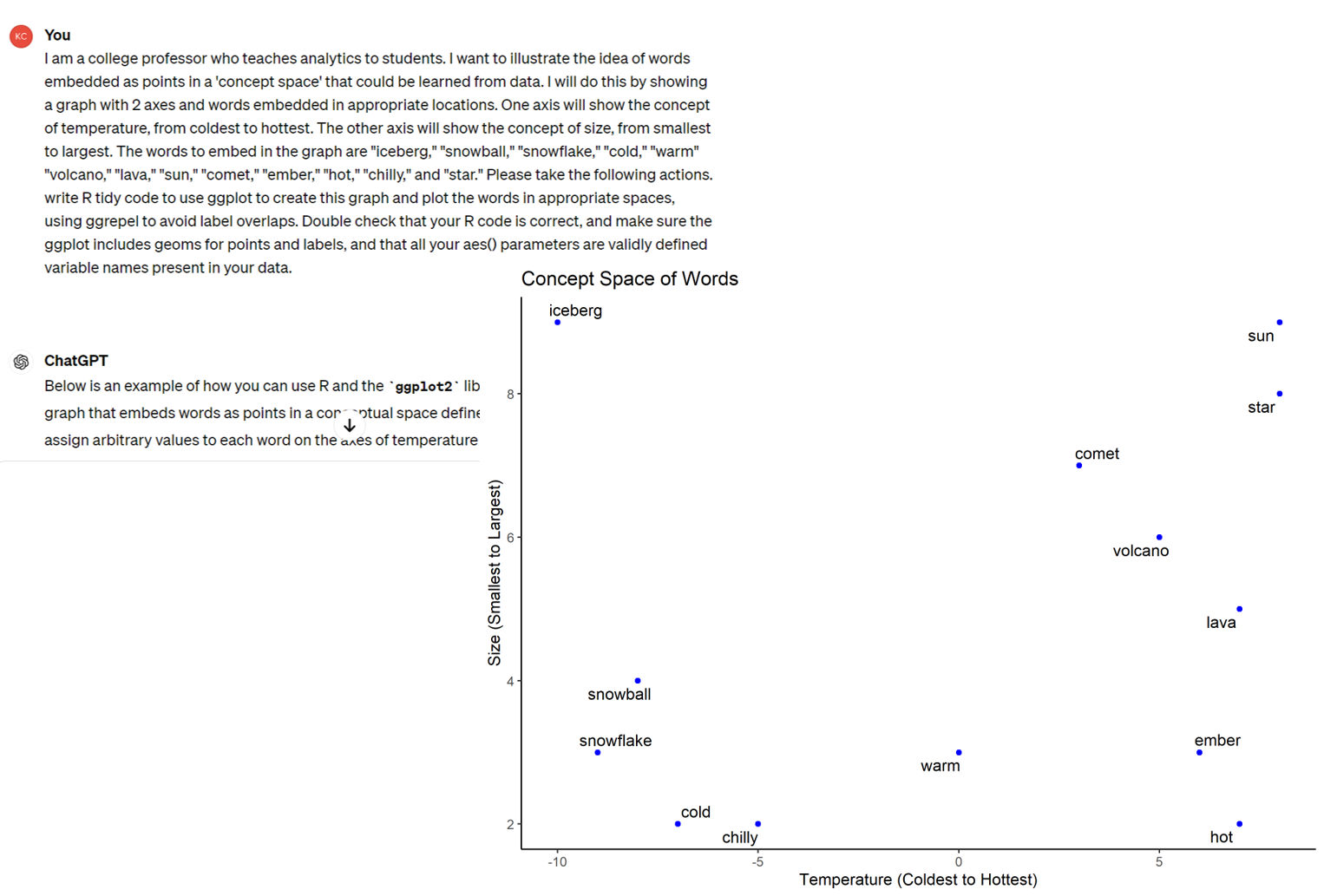

Embeddings

- Represent words as vectors in high-dim space. Really, “tokens,” but assume words==tokens for simplicity

- Assume \(W\) words, \(A<W\) abstract concepts. Assume we have all text data from all history. Each sentence is a point in \(W\)-dimensional space, with each coordinate \(w=1,...,W\) indicating a count of word \(w\) within the sentence

- We could run PCA to reduce from \(W\) to \(A\) dimensions. We have now encoded every sentence as a point in continuous A-space using a ‘bag-of-words’ approach

Embeddings connect text analysis to the PCA and dimensionality reduction ideas we just covered. Words become points in a continuous space, enabling mathematical operations on language. How does this compare to what we did with product attributes?
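A minimal sketch of the bag-of-words step: each sentence becomes a point in \(W\)-dimensional space, with one word count per coordinate. It assumes words are split on whitespace and lowercased, which is much cruder than real tokenization; the two sentences are made up.

```python
from collections import Counter

def bag_of_words(sentences):
    """Return (vocabulary, count vectors): one W-dimensional point per sentence."""
    tokenized = [s.lower().split() for s in sentences]
    vocab = sorted({w for words in tokenized for w in words})
    vectors = []
    for words in tokenized:
        counts = Counter(words)
        vectors.append([counts[w] for w in vocab])   # Counter gives 0 for absent words
    return vocab, vectors

sentences = ["the dog chased the cat", "the cat sat"]
vocab, vectors = bag_of_words(sentences)
print(vocab)
for v in vectors:
    print(v)
```

Word order is lost in this encoding: "the dog chased the cat" and "the cat chased the dog" map to the same point.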

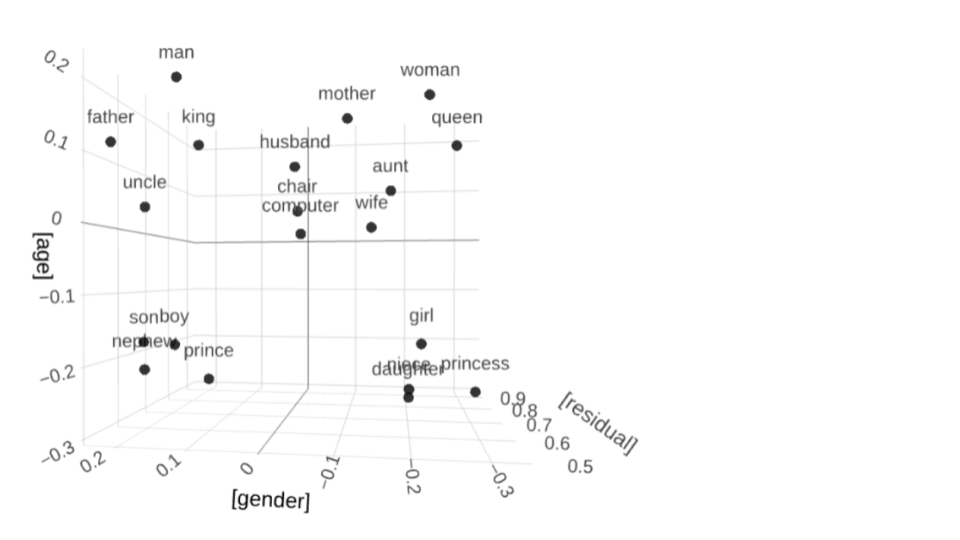

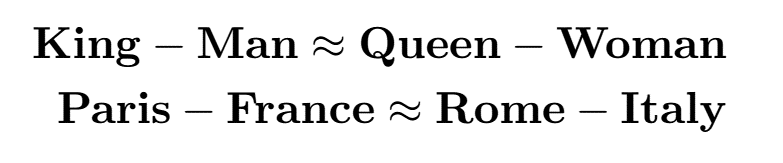

Cool things about embeddings

- We can do math using words!

Other classics include dollar - USA + UK ≈ pound ; Google - search + social ≈ Facebook. Triton - UCSD + UCLA ≈ ____ ?
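Word arithmetic can be sketched with hand-made vectors. The 3-D "embeddings" below are invented so that the well-known king - man + woman ≈ queen analogy works out; real embeddings are learned from text and have hundreds or thousands of dimensions. Closeness is measured with cosine similarity, a common choice.

```python
import math

# Hand-made toy vectors; the dimensions loosely mean (royalty, gender, food)
toy = {
    "king":  [0.9,  0.8, 0.1],
    "queen": [0.9, -0.8, 0.1],
    "man":   [0.1,  0.8, 0.0],
    "woman": [0.1, -0.8, 0.0],
    "apple": [0.0,  0.0, 0.9],
}

def cosine(a, b):
    """Cosine similarity: 1 means same direction, 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) *
                  math.sqrt(sum(y * y for y in b)))

def analogy(a, b, c, vectors=toy):
    """Word closest to vec(a) - vec(b) + vec(c), excluding the three inputs."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))

print(analogy("king", "man", "woman"))
```

With learned embeddings the match is approximate rather than exact, so the answer is whichever word lies nearest to the computed point.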

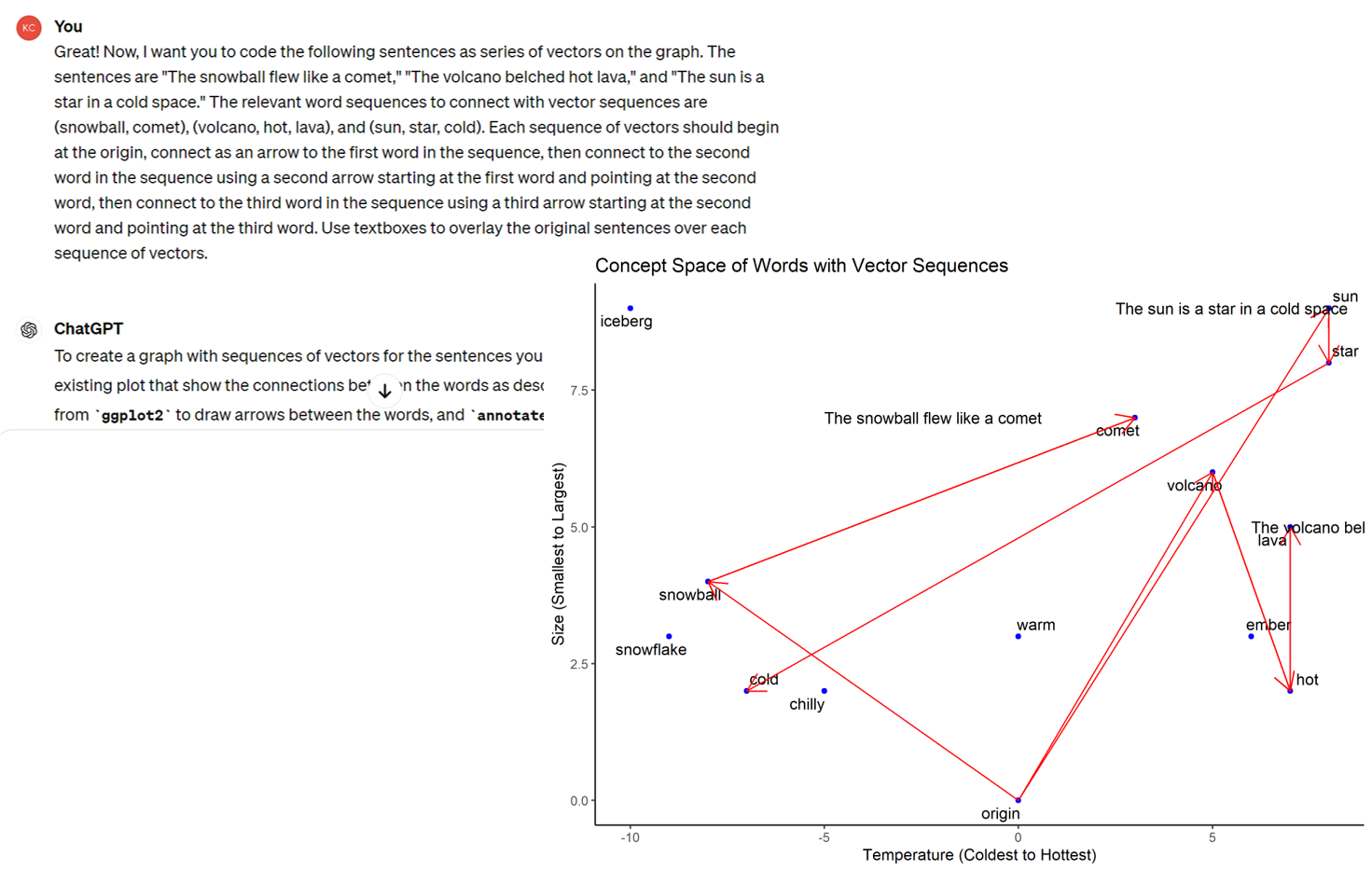

Suppose we had many many sentences, and defined 13 binary attributes for the presence of each of these 13 words. We could run PCA to reduce that 13-dimensional word space to a 2-dimensional concept space. Suppose we were to add a third dimension; what might it represent?

We could visualize each sentence within the concept space as a sequence of points. We could use this sequence data to train an autoregressive model to predict each sentence’s next point given the sequence’s history up to that point. What would that model commonly be called?

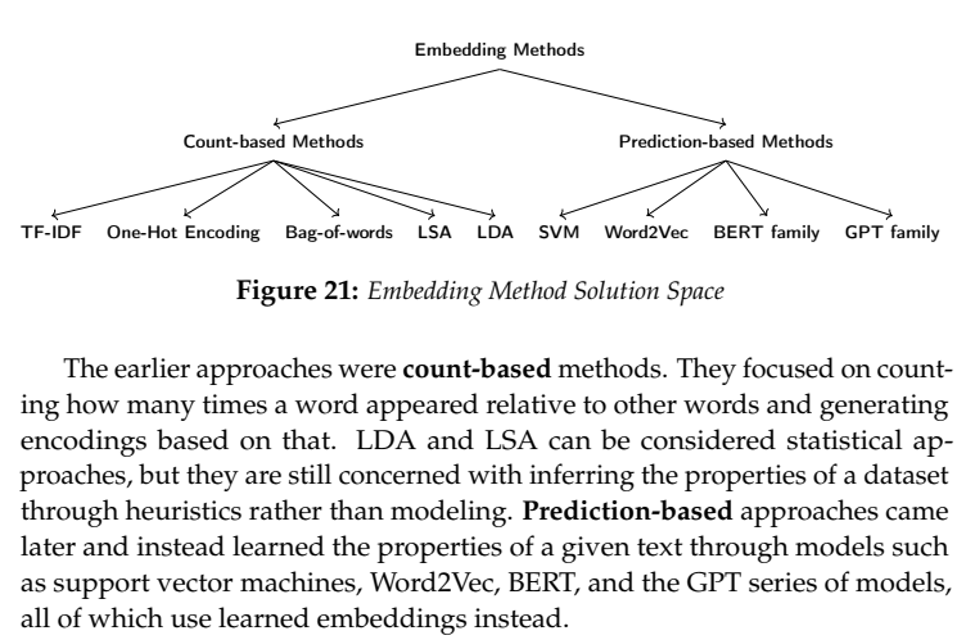

Many ways to encode embeddings

Transformers were developed to translate languages, e.g. mapping ‘sombrero’ to ‘hat.’ When trained well, they can transform data of generic type x to generic type y. There has been rapid progress in the past 20 years thanks to digital data, faster computers, and better algorithms.

RLHF

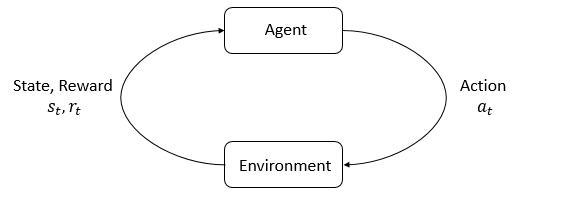

- Reinforcement Learning is a statistical paradigm to learn optimal actions for a given environment, action space and reward function

- HF refers to Human Feedback, which is generated through market research in which humans indicate which sequence completions are best

Have you ever trained a dog to sit? If yes, you provided the reward function: You get treat/praise if and only if you sit. The dog learns its “policy function” through experimentation. What is the reward function in RLHF for LLMs?
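The dog example can be written as a tiny learning loop. This is an epsilon-greedy bandit, a far simpler relative of the methods used in RLHF, offered only to make "reward function" and "policy" concrete. The action names, `epsilon`, and trial count are all assumptions for the sketch.

```python
import random

def train_dog(n_trials=500, epsilon=0.2, seed=0):
    """Learn which action earns a treat by trial and error."""
    rng = random.Random(seed)
    actions = ["bark", "roll over", "sit"]
    value = {a: 0.0 for a in actions}    # estimated reward of each action
    tries = {a: 0 for a in actions}
    for _ in range(n_trials):
        if rng.random() < epsilon:
            action = rng.choice(actions)               # explore: try something at random
        else:
            action = max(actions, key=value.get)       # exploit: best guess so far
        reward = 1.0 if action == "sit" else 0.0       # the trainer's reward function
        tries[action] += 1
        # Update the running average reward for the action just taken
        value[action] += (reward - value[action]) / tries[action]
    return value

value = train_dog()
policy = max(value, key=value.get)       # learned policy: the highest-valued action
print(policy, value)
```

The learner never sees the rule "sit earns a treat"; it infers the rule from rewards alone. In RLHF the reward comes from a model of human preferences over sequence completions rather than from a fixed rule.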

How LLMs work

Train once: Pre-train on all digital text to learn possible sequences in concept-space; then fine-tune via RLHF

Then, when you input a prompt:

1. Embed: Encode prompt as a sequence of points in concept-space

2. Attend: Transformer layers reconsider each token’s contextual location relative to all other tokens and its own position

   - ‘the bank of the river’ vs ‘the bank near the river’

3. Sample: Output a probability distribution over all possible next tokens; sample one

4. Append: Add the sampled token to the previous prompt

5. Repeat steps 1-4 until a stopping condition is met

Sell access to customers, train a bigger model, teach the model to improve itself, take over the world.

Newer ‘reasoning’ features break this process up into sub-steps for complex prompts, and evaluate subsequence utility via narrower versions of RLHF. For example, if a prompt response includes specifying and then solving a math problem, the output can be compared against a database of correctly-solved problems, and discarded if the wrong solution was provided, and a new sequence can be generated. This usually involves generating multiple sequences and outputting the most-rewarded.
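The sample, append, and repeat part of the prompt loop can be sketched with a toy stand-in for the model. The `NEXT` table of hand-written probabilities is an assumption that replaces the embed and attend steps entirely, and it looks only at the last token, whereas a real transformer conditions on the whole sequence.

```python
import random

# Hand-written next-token probabilities standing in for a trained model
NEXT = {
    "<start>": {"the": 1.0},
    "the":     {"bank": 0.6, "river": 0.4},
    "bank":    {"of": 0.7, "<end>": 0.3},
    "of":      {"the": 1.0},
    "river":   {"<end>": 1.0},
}

def generate(prompt, max_tokens=10, seed=0):
    """Sample one token at a time until <end> or the length limit."""
    rng = random.Random(seed)
    tokens = list(prompt)
    for _ in range(max_tokens):
        dist = NEXT[tokens[-1]]                      # distribution over next tokens
        nxt = rng.choices(list(dist), weights=list(dist.values()))[0]   # sample one
        if nxt == "<end>":                           # stopping condition
            break
        tokens.append(nxt)                           # append, then repeat
    return tokens

print(" ".join(generate(["<start>"])))
```

Changing `seed` changes the output, which mirrors why the same prompt can produce different responses from an LLM.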

Class script

- Standardizing variables

- Iris example

- Running & graphing kmeans

- Use PCA to map the smartphone market

Wrapping up

Competition

- Run K-Means to cluster the respondents

- Give a meaningful name to each cluster

- Run PCA so that you can visualize the centroids in two dimensions

- Visualize the cluster centroids in two-dimensional space and use point sizes to indicate cluster sizes, and label the centroids, in order to map the distribution of MGT 100 students’ career ambitions

Try to make your visualization easy to understand. Test it on your friends before you submit.

Recap

- Customer needs best predict customer behavior, not demos

- Market maps depict competition, aid positioning

- K-Means augments a dataset by identifying similarities within it

- PCA compresses high-dim data into low-dim space w minimal information loss

- Embeddings represent words as points in concept-space

- Large language models are pre-trained to predict the next word, refined using RLHF, and generate text by recursively predicting next words

Going further

- Book: Applied Causal Inference Powered by ML and AI

- Deep Dive into LLMs like ChatGPT by Karpathy (2025)

- Tracing the thoughts of a large language model

- K-Means Clustering: An Explorable Explainer

- Learning the k in k-means (Hamerly and Elkan 2003)